Large Language Models (LLMs) are not the path to Artificial General Intelligence (AGI), no matter what Elon says. Anyone who has pre-trained, post-trained, or deployed LLMs in commercial settings knows this. It is what made my cofounder and me excited to start a domain-specific LLM company.

Unless there's another Transformer ("Attention is All You Need") moment, we will have more and more specialized models moving forward.

When you move beyond the tech bro twitter world and read machine learning papers from a wide array of authors, two options start to present themselves as to how the world of specialized models will evolve over the next decade. The two options have dramatically different compute demand profiles.

One option will see the large growth of specialized models consuming every GW of power and GPU capacity built. The other will result in massive losses for data center builders in the short term, but create an even more interesting future.

Option 1: Massive Growth of Specialized LLM (Transformer) Models

There will be a significant increase in the number of specialized models because Transformer-type (LLM / AI) breakthroughs are rare. The Transformer architecture first proposed in "Attention is All You Need" was built upon decades of research in machine learning, computer science, linguistics, and other fields. Another breakthrough of that magnitude is unlikely.

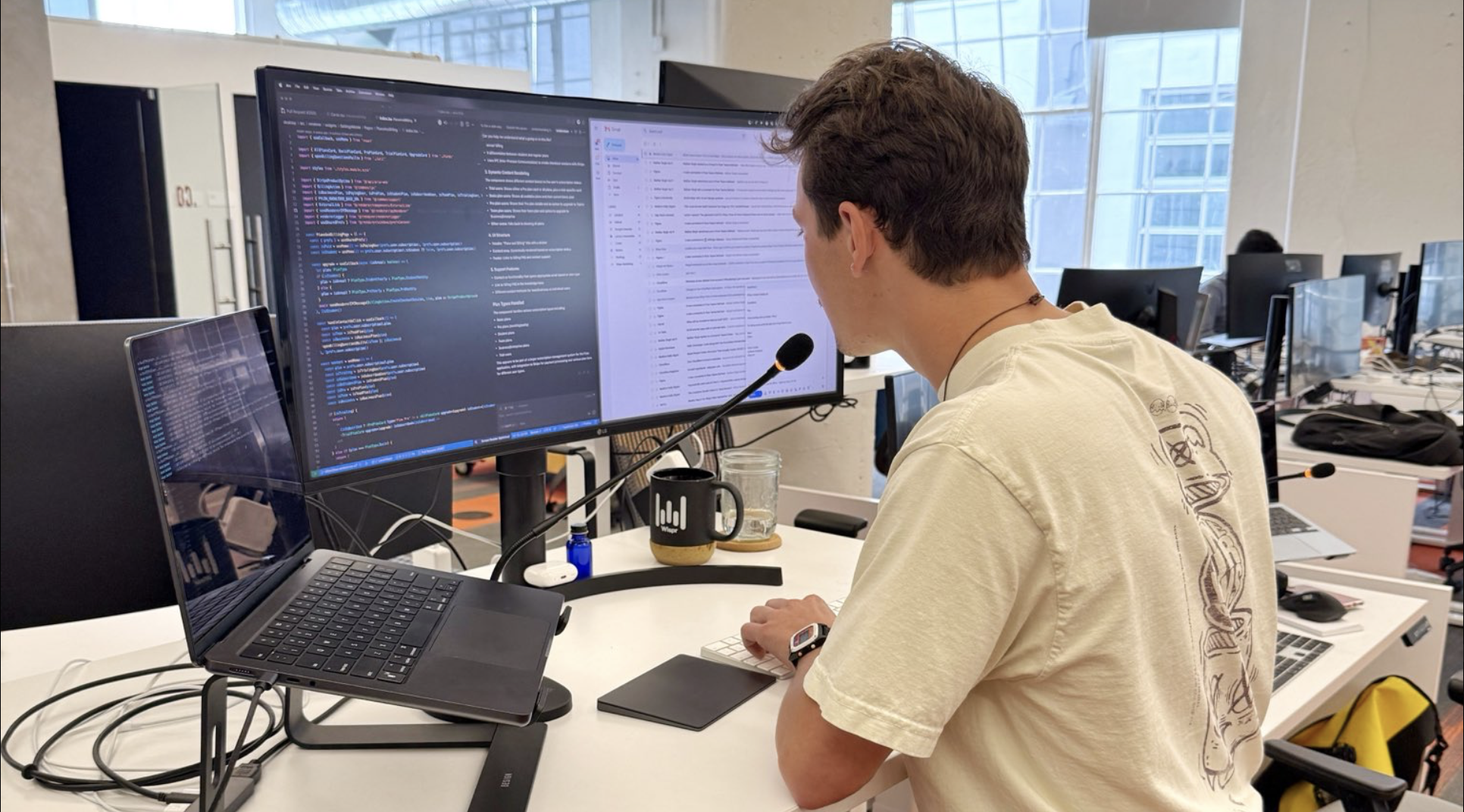

Given this constraint, a dramatic increase in the number of specialized models, and of domain-specific solutions that stitch together multiple specialized models, is inevitable. It is now relatively cheap to create a domain-specific model at or exceeding human-level quality.

Speech-to-text is a great example of why specialized models will transform society. The human-level quality of this model was the result of combining the Transformer architecture, domain-specific data (in this case speech recordings), and GPU compute. The most interesting part is that the model has only 2 billion parameters. Compare this to GPT-4, which is estimated to have 1.8T parameters. A model 900 times the size can't even interpret a speech sound wave.

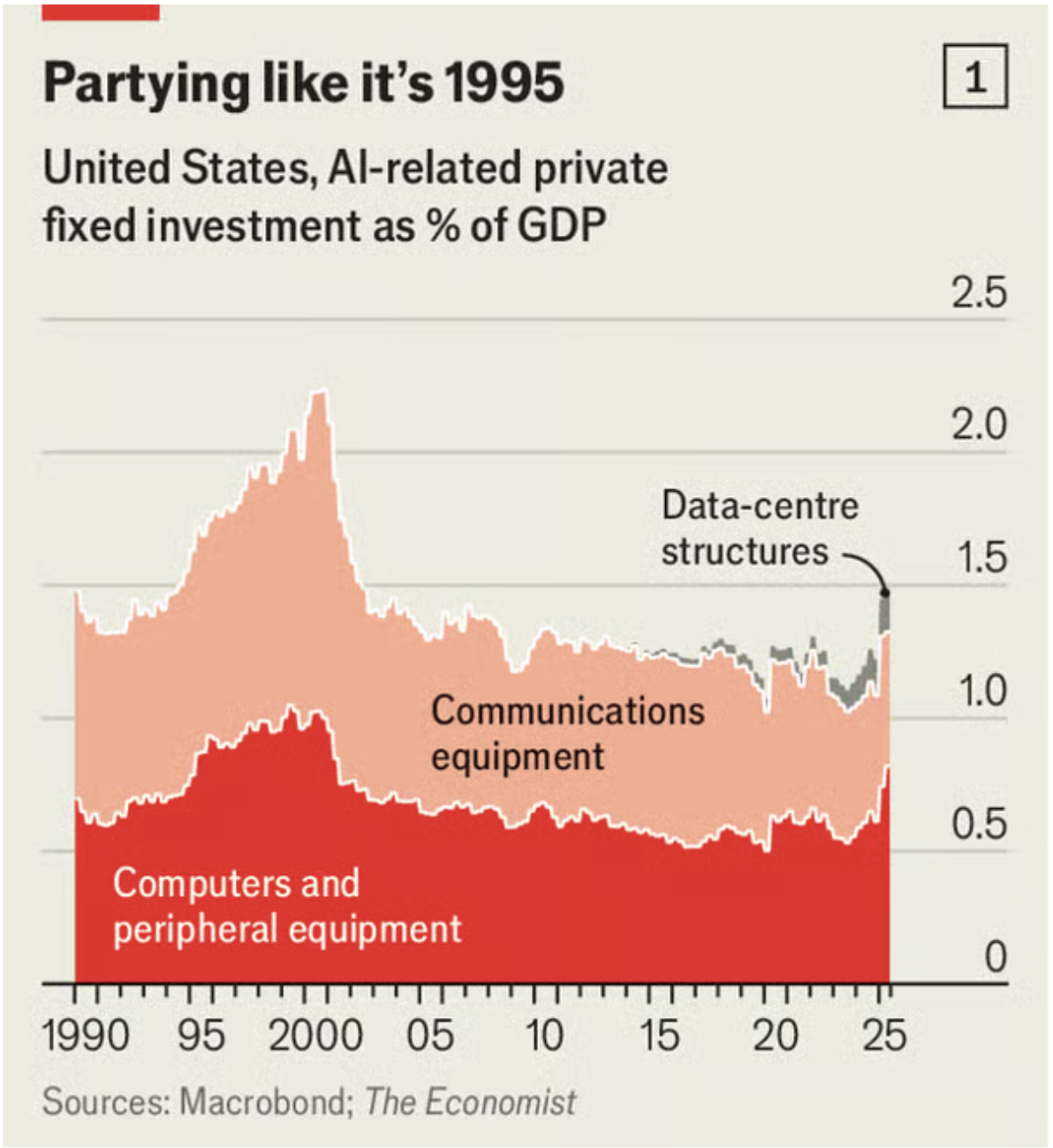
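To make the speech-to-text comparison concrete, here is a minimal sketch of the arithmetic. The two parameter counts come from the text (the GPT-4 figure is an unconfirmed estimate); the 2 bytes per parameter used for the memory figures is an assumption for illustration, not a claim about how either model is actually stored.

```python
# Parameter counts quoted in the text. The GPT-4 number is an estimate.
SPEECH_MODEL_PARAMS = 2e9
GPT4_PARAMS_ESTIMATE = 1.8e12

# Assumption for illustration only: 16-bit weights, i.e. 2 bytes per parameter.
BYTES_PER_PARAM = 2


def size_ratio(large_params: float, small_params: float) -> float:
    """How many times larger one model is than another, by parameter count."""
    return large_params / small_params


def weight_memory_gb(params: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Approximate memory needed just to hold the weights, in gigabytes."""
    return params * bytes_per_param / 1e9


ratio = size_ratio(GPT4_PARAMS_ESTIMATE, SPEECH_MODEL_PARAMS)
speech_gb = weight_memory_gb(SPEECH_MODEL_PARAMS)
gpt4_gb = weight_memory_gb(GPT4_PARAMS_ESTIMATE)

print(f"size ratio: {ratio:.0f}x")
print(f"speech model weights: ~{speech_gb:.0f} GB")
print(f"GPT-4 (estimated) weights: ~{gpt4_gb:.0f} GB")
```

Under that assumption, the specialized model's weights fit on a single consumer GPU, while the general model's weights need a multi-GPU cluster before it serves a single request.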

This recipe of Transformer, domain-specific data, and compute will give us specialized products for every niche of our economy. As more and more effort goes into making hyper-specific specialized models easier to build, the compute needed to train and run these models every day will require the AI infrastructure datacenter buildout we are currently embarking upon, and more.

Option 2: Algorithmic Black Swan Event

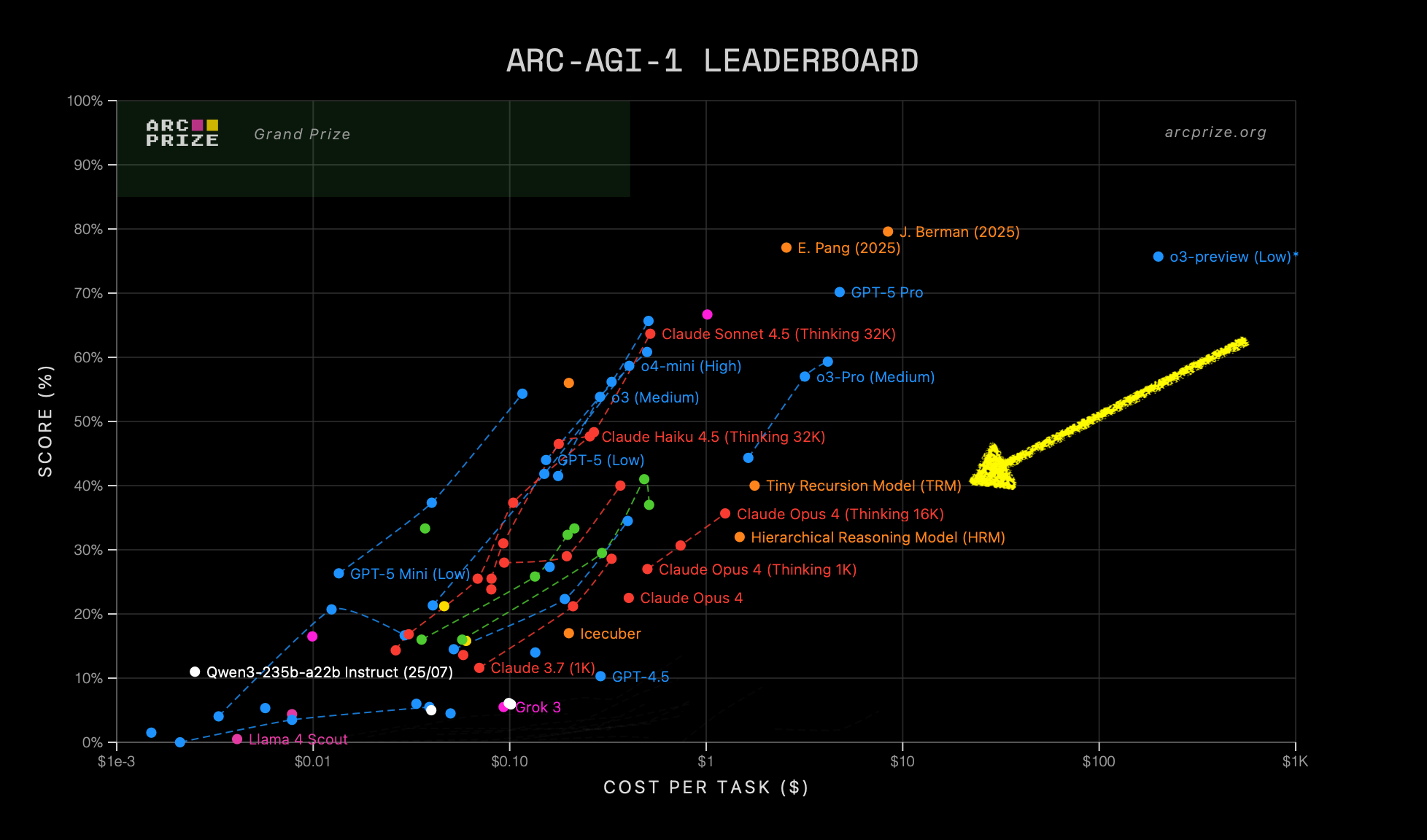

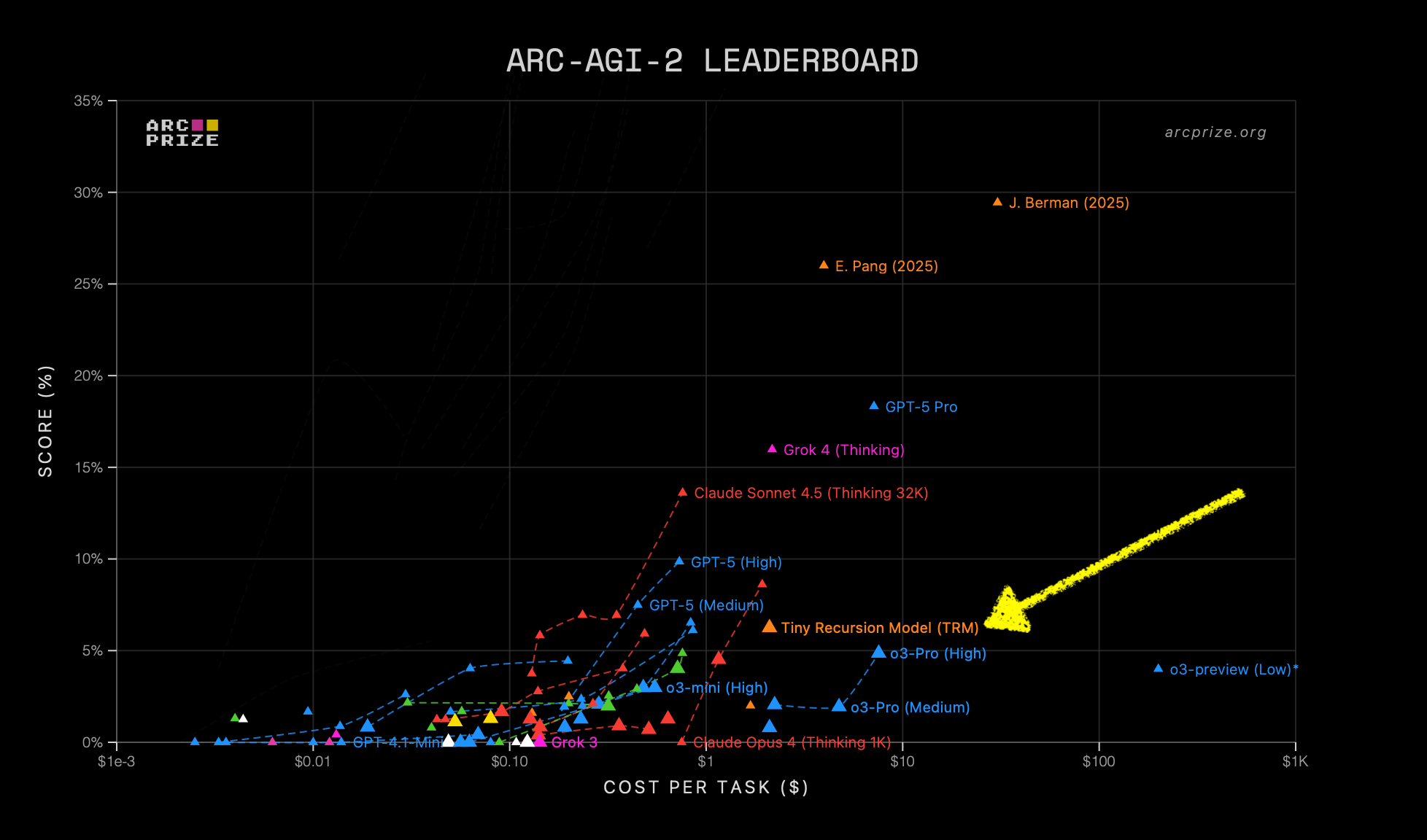

A model that's 900x smaller, like the speech-to-text example, is already incredible. Even within the current Transformer paradigm, we will continue to see more such parameter efficiencies.

The Black Swan event that folks should be concerned about is the possibility of an algorithm that doesn't reduce the model size by 900x, but instead by 10,000x.
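As a rough sense of scale, the sketch below applies both reduction factors to the 1.8T-parameter GPT-4 estimate mentioned earlier. Using that estimate as the baseline and assuming 2 bytes per parameter are illustrative assumptions, and parameter count is only a proxy for compute demand.

```python
# Baseline: the GPT-4 parameter estimate quoted in the text (unconfirmed).
BASELINE_PARAMS = 1.8e12

# Assumption for illustration only: 16-bit weights, i.e. 2 bytes per parameter.
BYTES_PER_PARAM = 2


def reduced_model(baseline_params: float, reduction_factor: float,
                  bytes_per_param: int = BYTES_PER_PARAM) -> tuple[float, float]:
    """Return (parameter count, weight memory in GB) after shrinking the baseline."""
    params = baseline_params / reduction_factor
    memory_gb = params * bytes_per_param / 1e9
    return params, memory_gb


results = {}
for factor in (900, 10_000):
    params, memory_gb = reduced_model(BASELINE_PARAMS, factor)
    results[factor] = (params, memory_gb)
    print(f"{factor:>6}x smaller: {params / 1e6:,.0f}M parameters, ~{memory_gb:.2f} GB of weights")
```

At 10,000x, the hypothetical model lands in the range of a few hundred million parameters, small enough that its weights would fit in the memory of an ordinary phone or laptop.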

The entire multi-trillion dollar "AI Infra Bubble" is built on the assumption of compute scarcity. The investment thesis for companies like NVIDIA, and the data center builders downstream, is that they are selling the scarce "picks and shovels" for an AI gold rush.

An algorithmic breakthrough like this is the "small pointy needle" that could cause significant short term shocks to public and private markets.

Now imagine a future where instead of only having ChatGPT, Gemini, Grok, and Claude, every individual can create, curate, and shape many intelligence systems that understand and adapt to their hyper personalized world. In that world, where the cost of customized intelligence approaches zero because of algorithmic breakthroughs, every processing unit would be buzzing all night long.

At Daedaline, we believe that in either scenario the world will move towards highly specialized models, which will become the standard for the most demanding domains.